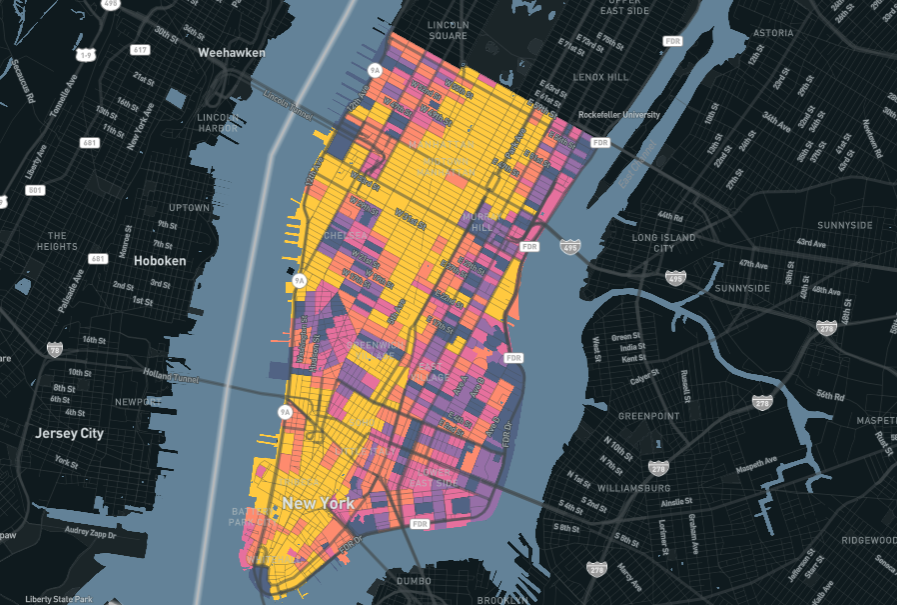

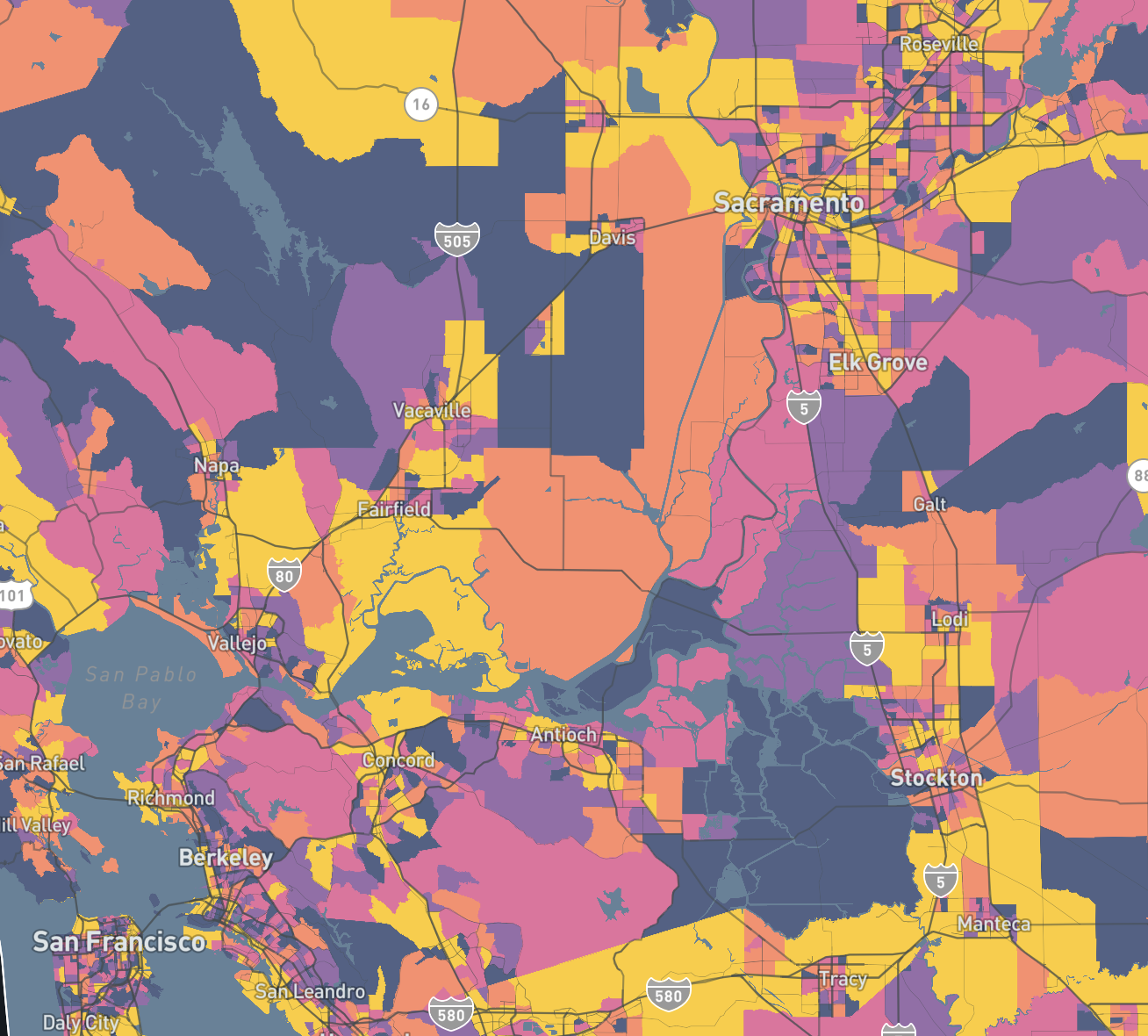

Replica helps agencies understand how people move through and interact with their cities. Our platform creates a privacy-preserving replica of real-world activity by combining mobility, demographic, land use, economic activity, safety, and network data into a unified model. This allows teams to analyze travel patterns, evaluate future scenarios, and prioritize infrastructure investments.

A key part of building this model is sourcing ground-truth datasets from real transportation systems. These datasets inform the model and provide a way to validate the results. Transit ridership data is one of the most important inputs.

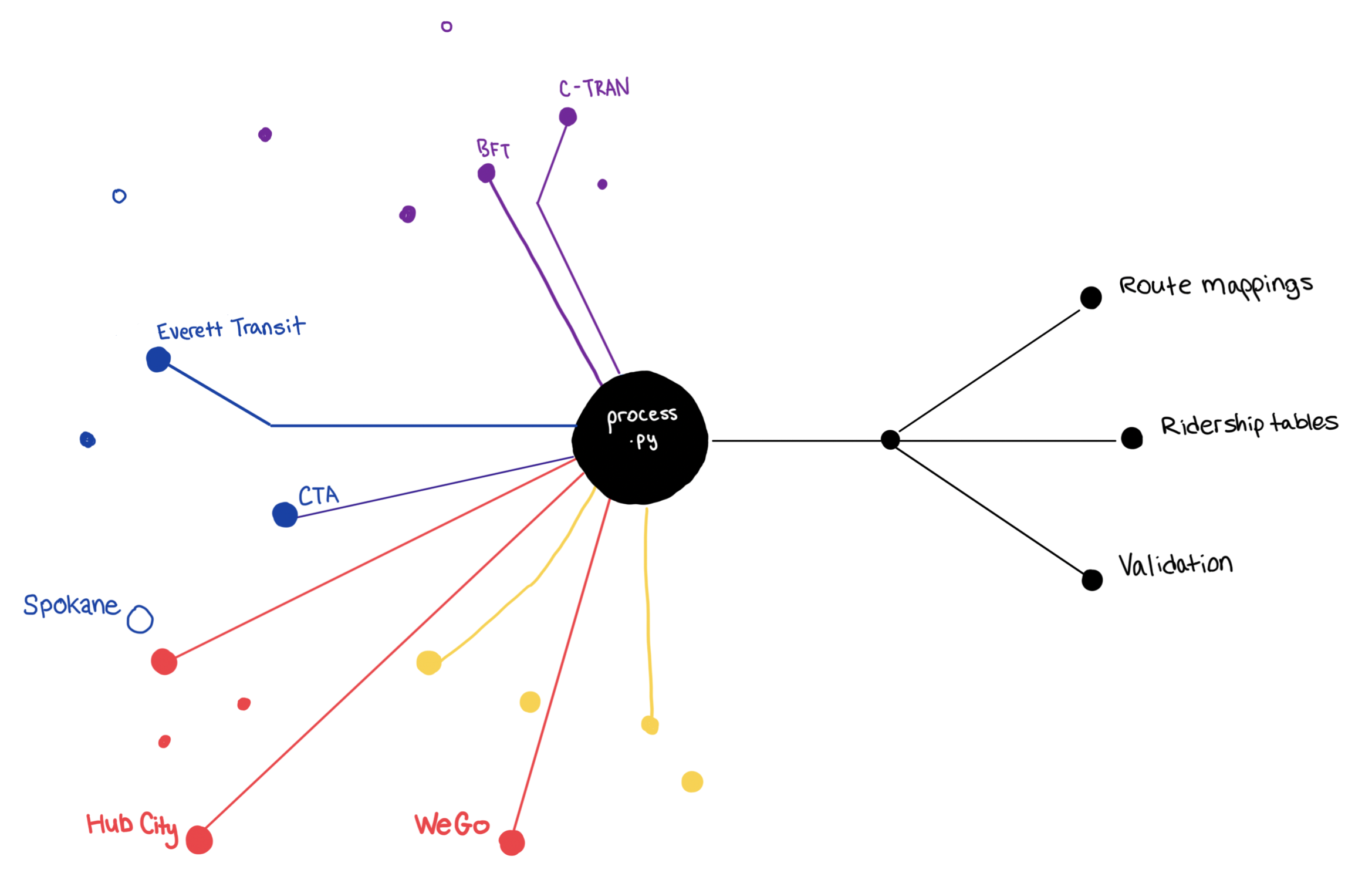

Every year, two members of Replica’s data science team process transit ridership data from more than 400 transit agencies across the United States.

Agencies publish transit data in a variety of formats, naming conventions, and levels of detail, reflecting different systems and reporting approaches. Before this data can be used in Replica’s modeling system, it must be cleaned, standardized, and validated.

We recently rebuilt the system that supports this work so our team can process hundreds of datasets efficiently while still giving attention to edge cases that require human judgment. This post explains how that system works.

Working with Transit Ridership Data

Transit ridership counts help anchor the model in how transit systems actually operate. We compare trips simulated by the model with agency-reported ridership volumes and adjust our outputs so the patterns align more closely with observed usage. This step matters because agencies rely on these outputs to understand demand, identify gaps in service, and evaluate how changes to infrastructure or policy may affect travel patterns. Calibrating the model with observed ridership data helps ensure these analyses reflect realistic transportation patterns.

When we begin work on a new seasonal release of our model, one of the first steps is collecting ground truth datasets from transportation systems around the country, including transit ridership data. Transit agencies publish ridership data in many different ways. Some agencies provide structured datasets through open data portals (to those agencies, we thank you). Others publish spreadsheets or reports. Route naming conventions and dataset structures also vary widely.

Before this data enters Replica’s model development process, it is prepared, standardized, and validated so it can be used consistently alongside other datasets. The normalization process includes four main steps:

- Clean and structure ridership data into a consistent format

- Match agency route names with General Transit Feed Specification (GTFS) route identifiers

- Validate totals against historical volumes and National Transit Database (NTD) reporting

- Load the normalized data into BigQuery, our cloud-based data warehouse

Once the raw data is cleaned, the rest of the pipeline is largely the same for every agency. The main variation lies in how each agency structures its original dataset. Recognizing this pattern helped shape how we redesigned the system.

The Old Workflow

Python notebooks are a natural fit for data cleaning. Ridership datasets vary greatly between agencies, and analysts need space to explore the data before deciding how to clean it.

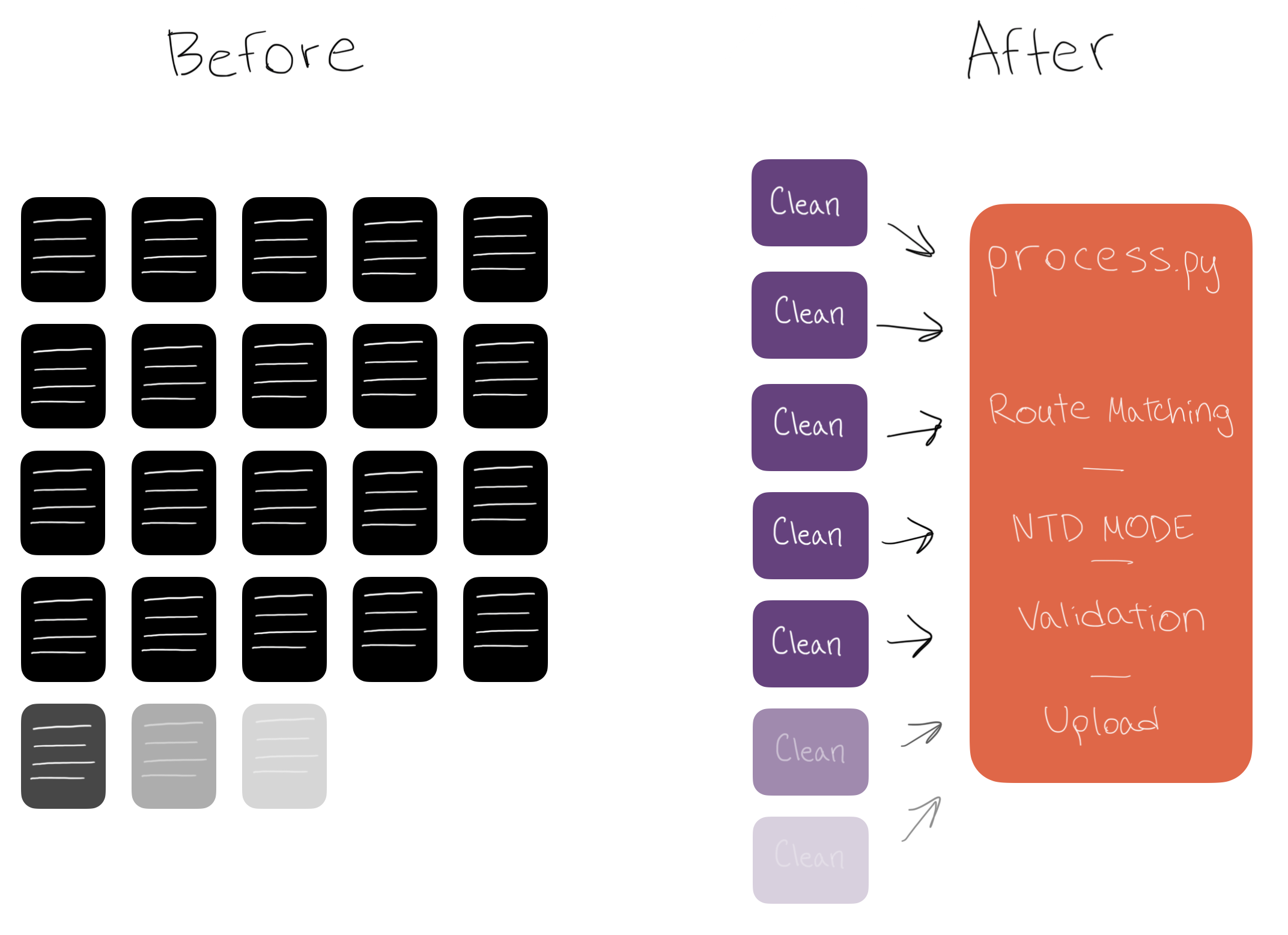

In previous seasons, our workflow relied on Jupyter notebooks. Each agency had its own notebook for each season, generated from a template using Papermill. The template walked the analyst through the full process: load the data, clean it, map the routes, validate totals, and upload the results.

This approach worked, but it came with tradeoffs. Each notebook was independent, which meant the same work had to be repeated for every agency, every season. Cleaning logic was rewritten. Route mapping was done manually. Improvements to the process were difficult to apply across all notebooks. Over time, it also became harder to separate what was standard from what was custom. Shared logic and agency-specific fixes lived side by side, making the system harder to maintain.

We needed a way to keep the flexibility analysts rely on while making the process more consistent and easier to scale.

Design Principles

We rebuilt the workflow around three principles.

Separate what changes from what does not

Most steps in the pipeline are consistent across agencies. The main variation is how each dataset needs to be cleaned. We split the system into two parts: a shared processing layer for common steps, and small, per-agency cleaner notebooks for custom logic. This makes it easier to improve the system without repeating work across hundreds of agencies.

Automate where it helps, keep people involved where it matters

Some steps benefit from automation, especially when patterns repeat across agencies. Others still require judgment. We introduced tools that suggest route matches and flag potential issues, while keeping analysts in control of final decisions. This allows the system to move faster without losing accuracy.

Build on previous work

While raw data varies across agencies, a given agency's data tends to stay consistent over time. We now reuse cleaner logic, route mappings, and validation baselines from previous seasons. This reduces manual work and improves consistency from one release to the next.

Moving Beyond Jupyter

We still wanted a notebook-style workflow, since it works well for exploring and cleaning unfamiliar datasets. But we needed more structure and better coordination between steps.

We adopted marimo, a reactive Python notebook that automatically re-runs downstream cells when inputs change. This removes a common issue in Jupyter workflows, where running cells out of order can lead to inconsistent results. For us, this reactivity is what makes the shared processing notebook possible: selecting a different agency from a dropdown triggers a full re-execution of every downstream cell, from cleaning through validation.

Marimo also supports running notebooks as applications using marimo run. In this mode, the underlying code is hidden and only inputs, outputs, and interactive elements are shown. This allowed us to build interactive tools for tasks like route matching and validation that analysts can use directly, while still being able to drop into the code when needed for debugging. As a result, we could introduce structure without changing how analysts prefer to work.

The Split-Notebook Architecture

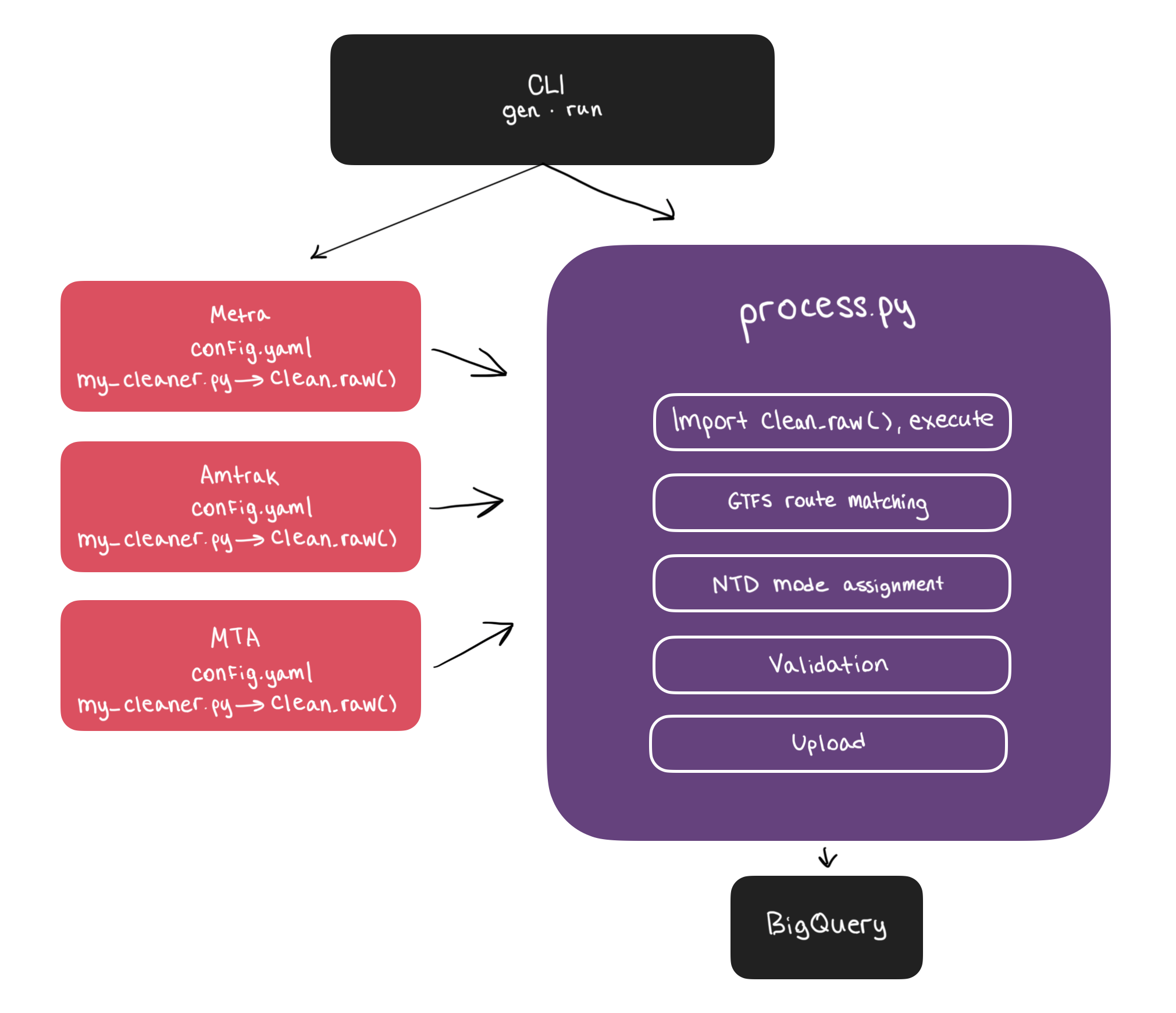

The system is built around two components that work together: cleaner notebooks and a shared processing notebook.

The Cleaner Notebook

Each agency has a small marimo notebook called my_cleaner.py. This is where (and only where) agency-specific complexity lives. The notebook loads the agency's raw ridership file, displays it for inspection, and exposes a clean_raw() function where an analyst writes the logic to wrangle that agency's data into our canonical schema.

For many agencies, this step is straightforward. We use an auto_clean function that handles common transformations such as lowercasing and standardizing column names, parsing dates across multiple formats, casting data types, and stripping commas or other formatting from numeric fields. In simple cases, clean_raw() can be as minimal as:

```python
def clean_raw(gcs_path, data):
    return auto_clean(data.pl)
```

auto_clean returns a CleanedDataFrame wrapper that intentionally hides underlying DataFrame methods. This ensures auto_clean remains the final step inside clean_raw() and protects upstream logic if we later expand or modify the standard transformations.
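The kinds of transformations described for auto_clean can be illustrated with a minimal, standard-library sketch. This is not Replica's implementation: it works on plain dictionaries rather than polars DataFrames, and the helper names, the date formats, and the `date` and `ridership` column names are all assumptions made for illustration.

```python
import re
from datetime import date, datetime

# Hypothetical set of accepted formats; the real auto_clean may differ.
DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%Y/%m/%d")


def standardize_column(name: str) -> str:
    """Lowercase a column name and collapse non-alphanumerics to underscores."""
    return re.sub(r"[^a-z0-9]+", "_", name.strip().lower()).strip("_")


def parse_date(value: str) -> date:
    """Try each known format in turn and fail loudly if none applies."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(value.strip(), fmt).date()
        except ValueError:
            continue
    raise ValueError(f"Unrecognized date format: {value!r}")


def parse_count(value: str) -> int:
    """Strip thousands separators and whitespace before casting to int."""
    return int(value.replace(",", "").strip())


def auto_clean_rows(rows: list[dict[str, str]]) -> list[dict]:
    """Apply the standard transformations to every raw row.

    Assumes each row has 'date' and 'ridership' columns once the
    column names have been standardized.
    """
    cleaned = []
    for row in rows:
        record = {standardize_column(key): value for key, value in row.items()}
        record["date"] = parse_date(record["date"])
        record["ridership"] = parse_count(record["ridership"])
        cleaned.append(record)
    return cleaned
```

Keeping each transformation in its own small function mirrors the idea in the text: a new recurring pattern can be added in one place and every agency picks it up.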

For more complex datasets, analysts can explore transformations using polars or pandas directly in the notebook. Once the logic is worked out, they consolidate it into clean_raw(). Marimo treats this as a reusable function which can be imported by the shared processing notebook.

These cleaner notebooks persist across seasons. If an agency’s format remains consistent, the same clean_raw() logic can be used again without modification. Over time, as more recurring patterns are folded into auto_clean, fewer agencies require custom cleaning logic.

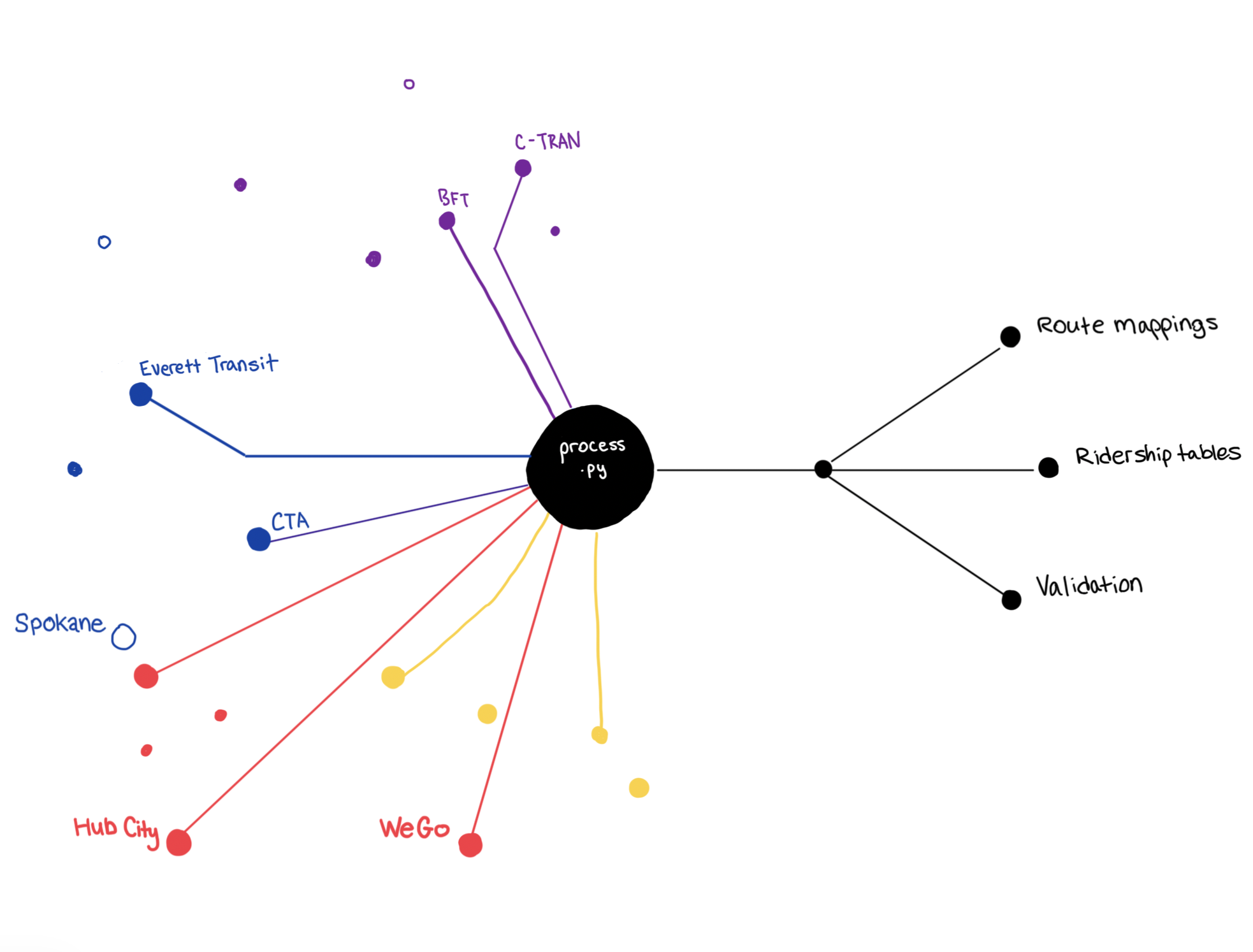

The Processing Notebook

The main processing notebook, process.py, handles everything downstream of cleaning. Each agency has a configuration file (config.yaml) that includes metadata such as the raw data location, GTFS route data, NTD_ID, and the relevant season dates. These configuration files are generated automatically by querying our data catalog, which reduces manual setup and keeps the process consistent.

```yaml
AGENCY_NAME: Knoxville Area Transit
REGION_NAME: South Central
AGENCY_ID: '196'
RIDERSHIP_PATH: gs://transit_agency_sources_model/raw/2025_Q4/south_central/Knoxville_Area_Transit/south_central_Knoxville_Area_Transit.csv
GTFS_PATH: gs://city_data/south_central/2025_Q4/gtfs/metadata/south_central_route_metadata.csv
SEASON_START_DATE: '2025-09-01'
SEASON_END_DATE: '2025-09-30'
NTD_ID: '40002'
SEASON: 2025_Q4
COMPLETED: true
```

When an analyst selects an agency, the notebook loads that agency's config.yaml, dynamically imports its my_cleaner.py module, calls clean_raw(), and feeds the result into the downstream pipeline. All of the relevant metadata are pulled in automatically based on the configuration.
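One way to do the dynamic import step is with Python's importlib. The sketch below is an assumption about the mechanics, not the actual process.py code: `load_cleaner` and `run_cleaner` are hypothetical helpers, the config is assumed to have already been parsed from config.yaml into a dictionary, and each agency is assumed to have its own directory containing my_cleaner.py.

```python
import importlib.util
from pathlib import Path
from types import ModuleType


def load_cleaner(cleaner_path: Path) -> ModuleType:
    """Import a cleaner file as a module, without modifying sys.path."""
    # Give each agency's module a distinct name based on its directory.
    module_name = f"cleaner_{cleaner_path.parent.name}"
    spec = importlib.util.spec_from_file_location(module_name, cleaner_path)
    if spec is None or spec.loader is None:
        raise ImportError(f"Cannot load cleaner from {cleaner_path}")
    module = importlib.util.module_from_spec(spec)
    spec.loader.exec_module(module)
    return module


def run_cleaner(agency_dir: Path, config: dict, raw_data):
    """Call the agency's clean_raw() with the path recorded in its config."""
    cleaner = load_cleaner(agency_dir / "my_cleaner.py")
    return cleaner.clean_raw(config["RIDERSHIP_PATH"], raw_data)
```

Loading by file path rather than by package name means hundreds of files that share the name my_cleaner.py can coexist without colliding.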

From that point on, every agency follows the same sequence of steps. Because marimo is reactive, selecting a different agency triggers a full re-execution of downstream cells. Analysts can process dozens of agencies in a single session without switching tabs, opening a new notebook, or re-running cells manually.

Marimo's table of contents is useful here as well. Each section of the processing notebook includes its validation status in the heading, so an analyst can glance at the sidebar and immediately see which steps passed and where problems occurred without scrolling through the full notebook.

Route Matching

One of the most time-consuming steps in the old workflow was matching agency-reported route names to GTFS identifiers. This step is important because GTFS provides the canonical route identifiers that our model uses, while agency ridership data often follows different naming conventions.

We now automate most of this process. If an agency has been processed before, we reuse existing route mappings. Since route naming tends to remain consistent from season to season, this resolves the majority of cases that would otherwise require manual work. For routes that don't have a historical match, such as new routes, renamed routes, or agencies we're processing for the first time, we apply a matching approach that reflects how people interpret route names.

For example, a GTFS feed might list a route as "2", while the ridership data calls it "ROUTE 2: Blue Line (Kona-Hilo)". A naive string similarity approach like Levenshtein distance would score these as roughly 3% similar, since the matching characters are dwarfed by the additional text. A human, however, would immediately recognize them as the same route.
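The 3% figure can be reproduced with a short, standard-library sketch. It assumes similarity is defined as one minus the edit distance divided by the longer string's length, which is one common normalization and not necessarily the one the team used.

```python
def levenshtein(a: str, b: str) -> int:
    """Classic dynamic-programming edit distance (insert, delete, substitute)."""
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            current.append(
                min(
                    previous[j] + 1,         # deletion
                    current[j - 1] + 1,      # insertion
                    previous[j - 1] + cost,  # substitution or match
                )
            )
        previous = current
    return previous[-1]


def similarity(a: str, b: str) -> float:
    """Normalize the distance by the longer string's length (0 to 1)."""
    longest = max(len(a), len(b))
    return 1.0 if longest == 0 else 1 - levenshtein(a, b) / longest


gtfs_name = "2"
ridership_name = "ROUTE 2: Blue Line (Kona-Hilo)"
print(levenshtein(gtfs_name, ridership_name))          # 29
print(f"{similarity(gtfs_name, ridership_name):.1%}")  # 3.3%
```

The ridership name is 30 characters long and shares only the single character "2" with the GTFS name, so 29 insertions are needed and the similarity is 1/30.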

Our approach, inspired by Robin Linacre's work on address matching, works in a few steps:

- Tokenization: We split route names into tokens and remove common stopwords like "route", "line", and "bus". For example, "ROUTE 2: Blue Line (Kona-Hilo)" becomes ["2", "blue", "kona", "hilo"].

- IDF weighting: We calculate Inverse Document Frequency (IDF) weights across the full set of route names from both GTFS and ridership data. Tokens that appear frequently receive lower weight, while more distinctive tokens receive higher weight.

- Numeric bonus: Route numbers are highly discriminative identifiers, so numeric tokens are given 3x additional weight.

- Scoring: For each GTFS route, we compute a weighted match score against ridership route names, prioritizing exact matches and falling back to fuzzy matching for non-numeric tokens.
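The steps above can be sketched in a few dozen lines of Python. This is a simplified illustration under stated assumptions, not the production matcher: the smoothed IDF formula, the reading of "3x additional weight" as a multiplier of 3, and the choice to normalize by the GTFS name's token weight are all assumptions, and the fuzzy fallback for non-numeric tokens is omitted. All function names are hypothetical.

```python
import math
import re
from collections import Counter

STOPWORDS = {"route", "line", "bus"}
NUMERIC_BONUS = 3.0  # assumed interpretation of the 3x numeric weight


def tokenize(name: str) -> list[str]:
    """Lowercase, split on non-alphanumerics, and drop stopwords."""
    tokens = re.findall(r"[a-z0-9]+", name.lower())
    return [token for token in tokens if token not in STOPWORDS]


def idf_weights(names: list[str]) -> dict[str, float]:
    """Smoothed IDF over all route names; rarer tokens get larger weights."""
    doc_freq = Counter(token for name in names for token in set(tokenize(name)))
    total = len(names)
    return {t: math.log((1 + total) / (1 + df)) + 1 for t, df in doc_freq.items()}


def token_weight(token: str, idf: dict[str, float]) -> float:
    """IDF weight for a token, multiplied by the bonus if it is numeric."""
    weight = idf.get(token, 1.0)
    return weight * NUMERIC_BONUS if token.isdigit() else weight


def match_score(gtfs_name: str, ridership_name: str, idf: dict[str, float]) -> float:
    """Share of the GTFS name's token weight found exactly in the ridership name."""
    gtfs_tokens = set(tokenize(gtfs_name))
    ridership_tokens = set(tokenize(ridership_name))
    total = sum(token_weight(t, idf) for t in gtfs_tokens)
    if total == 0:
        return 0.0
    matched = sum(token_weight(t, idf) for t in gtfs_tokens & ridership_tokens)
    return matched / total


def best_match(gtfs_name: str, ridership_names: list[str], idf: dict[str, float]) -> str:
    """Pick the ridership name with the highest score for this GTFS route."""
    return max(ridership_names, key=lambda name: match_score(gtfs_name, name, idf))


# Made-up example: the route number outweighs the shared color word.
gtfs_names = ["2 Blue"]
ridership_names = ["ROUTE 2: Red Line", "ROUTE 3: Blue Line"]
idf = idf_weights(gtfs_names + ridership_names)
print(best_match("2 Blue", ridership_names, idf))  # ROUTE 2: Red Line
```

In the made-up example, "2" and "blue" have the same IDF weight, so without the numeric bonus the two candidates would tie. With it, the candidate sharing the route number scores 0.75 and the one sharing only the color scores 0.25.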

Once matches are proposed, the processing notebook presents them as interactive widgets where analysts can confirm or reassign mappings. In the previous workflow, this required manually editing Python dictionaries. With marimo’s built-in dropdowns, it becomes a quick point-and-click step.

Validation

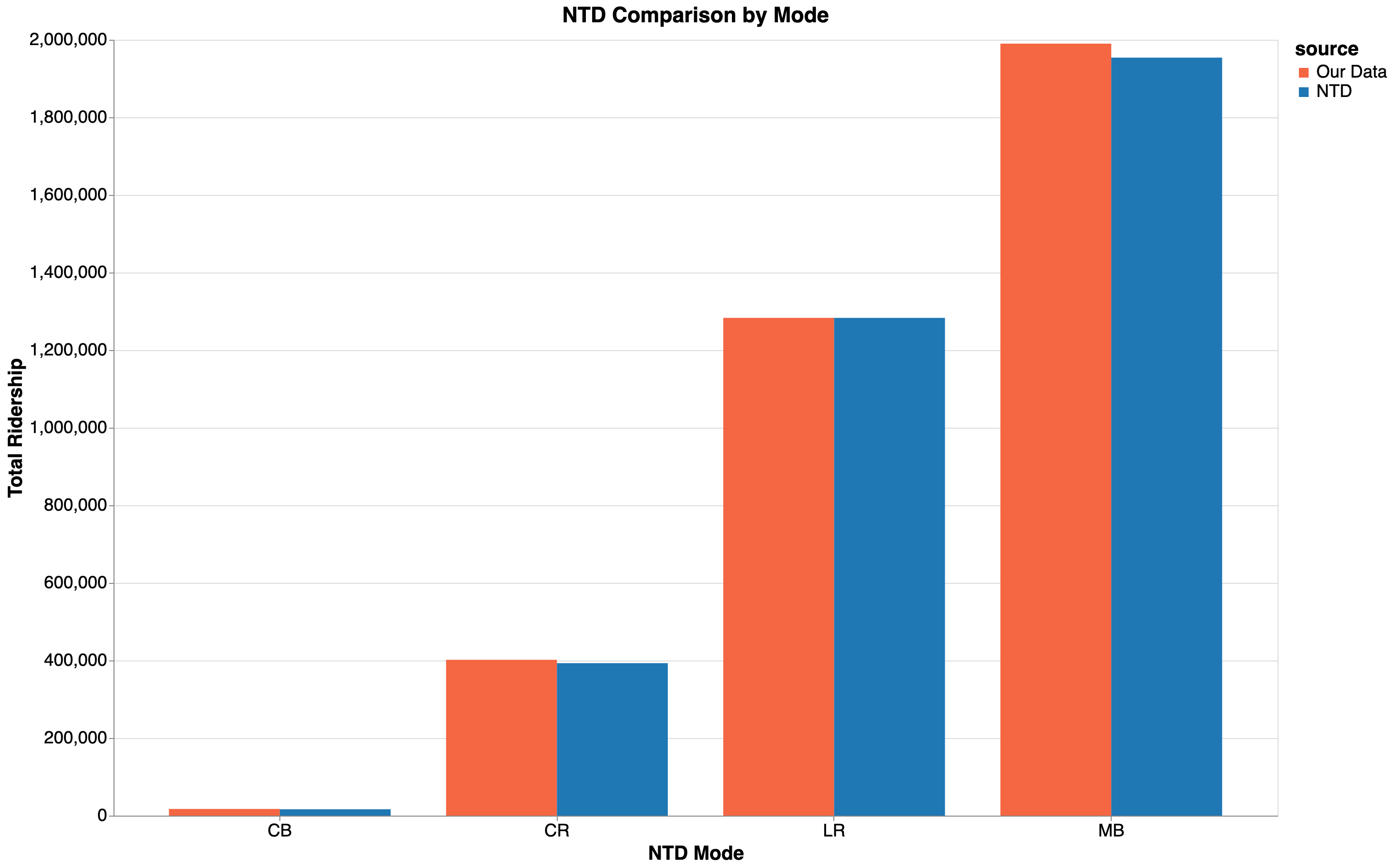

Before any data is finalized, the system runs a series of checks to identify potential issues. These include missing or duplicate records, gaps in date coverage, differences from NTD-reported totals, and unexpected changes from previous seasons.

When issues are detected, they are surfaced clearly so analysts can investigate and resolve them.
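Two of these checks, duplicate records and gaps in date coverage, are simple enough to sketch with the standard library. The sketch assumes daily records stored as dictionaries with `route_id` and `date` keys. Both the record layout and the function names are hypothetical, and agencies that report monthly totals would need a different notion of coverage.

```python
from collections import Counter
from datetime import date, timedelta


def find_duplicates(records: list[dict]) -> list[tuple]:
    """Return (route_id, date) keys that appear more than once."""
    counts = Counter((r["route_id"], r["date"]) for r in records)
    return sorted(key for key, n in counts.items() if n > 1)


def find_missing_dates(records: list[dict], start: date, end: date) -> list[date]:
    """Return every date in [start, end] that has no record for any route."""
    observed = {r["date"] for r in records}
    all_days = (start + timedelta(days=i) for i in range((end - start).days + 1))
    return [day for day in all_days if day not in observed]
```

Returning the offending keys and dates, rather than a bare pass/fail flag, gives the analyst something concrete to investigate.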

When NTD totals are available, the system compares our aggregated ridership against the NTD's reported figures by mode. If the variance exceeds a threshold, the system flags it. An optional scaling toggle lets analysts adjust ridership to match NTD totals when the discrepancy is understood (e.g., when an agency's data provide a sample of ridership, as opposed to a full census). Previous-season comparisons provide another sanity check, highlighting routes where ridership has changed dramatically, which may indicate a data quality issue or a genuine shift in service.
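The NTD comparison, the optional scaling, and the previous-season check can be sketched as follows. The source does not state the thresholds, so the 10% variance threshold and the 50% season-over-season limit below are placeholders, and the function names are hypothetical. For brevity the sketch handles a single mode; a per-mode version would apply the same logic to each mode's routes.

```python
NTD_VARIANCE_THRESHOLD = 0.10  # placeholder; the actual threshold is not stated


def ntd_variance(observed_total: float, ntd_total: float) -> float:
    """Signed relative difference between our total and the NTD total."""
    if ntd_total <= 0:
        raise ValueError("NTD total must be positive")
    return (observed_total - ntd_total) / ntd_total


def exceeds_threshold(
    observed_total: float,
    ntd_total: float,
    threshold: float = NTD_VARIANCE_THRESHOLD,
) -> bool:
    """Flag the agency when the absolute variance is above the threshold."""
    return abs(ntd_variance(observed_total, ntd_total)) > threshold


def scale_to_ntd(route_ridership: dict[str, float], ntd_total: float) -> dict[str, float]:
    """Rescale each route proportionally so the routes sum to the NTD total."""
    observed_total = sum(route_ridership.values())
    if observed_total <= 0:
        raise ValueError("Cannot scale when observed ridership is zero")
    factor = ntd_total / observed_total
    return {route: riders * factor for route, riders in route_ridership.items()}


def flag_large_changes(
    current: dict[str, float],
    previous: dict[str, float],
    max_change: float = 0.5,  # placeholder limit on relative change
) -> list[str]:
    """Routes present in both seasons whose ridership moved by more than max_change."""
    flagged = []
    for route, riders in current.items():
        prior = previous.get(route)
        if prior and abs(riders - prior) / prior > max_change:
            flagged.append(route)
    return sorted(flagged)
```

Proportional scaling preserves each route's share of the total, which fits the sampled-data case described above: the sample is assumed to get the relative distribution right even though its totals run low.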

All of this happens reactively. If an analyst reassigns a route mapping or toggles NTD scaling, every downstream validation updates immediately.

What Changed in Practice

The new system changed how the team works day to day.

Less context-switching. Analysts now operate within a single workflow instead of managing hundreds of independent notebooks. They open one notebook, pick an agency, do the work, and repeat.

Faster iteration on edge cases. When we discover a common data quality issue mid-season, we add a validation check to the shared notebook once and it immediately applies to every subsequent agency. In the old system, that fix would have needed to be retrofitted across each notebook. The same applies to auto_clean: when we identify a common data pattern, we add a normalization rule once and every agency benefits.

Higher confidence, faster throughput. For many agencies, processing time has dropped significantly. Tasks that previously took around 30 minutes to complete now take closer to 5. Across hundreds of agencies, that difference adds up quickly.

Looking Ahead

The biggest impact of this system is not just speed, but how the process improves over time.

Each season adds to a growing foundation of cleaner logic, route mappings, and validation baselines. As that foundation grows, the amount of manual work continues to shrink.

We are now extending this approach to other types of ground truth data, including auto and active transportation datasets. As we process more data in a consistent way, we strengthen the foundation of our model and the analyses agencies rely on to make decisions about their communities.
